Part I of an Applied AI Systems Series

AI is moving quickly. Every few months, the conversation shifts — new models, new abstractions, new frameworks promising leverage. It is easy to stay in theory. It is harder to build something real.

I wanted to understand applied AI quickly. Not by reading. Not by debating architectures. By building.

So I built an AI-powered app, and I built most of it with AI.

The fastest way I know to learn something is to dive in when time allows. Lalo Meals became that dive.

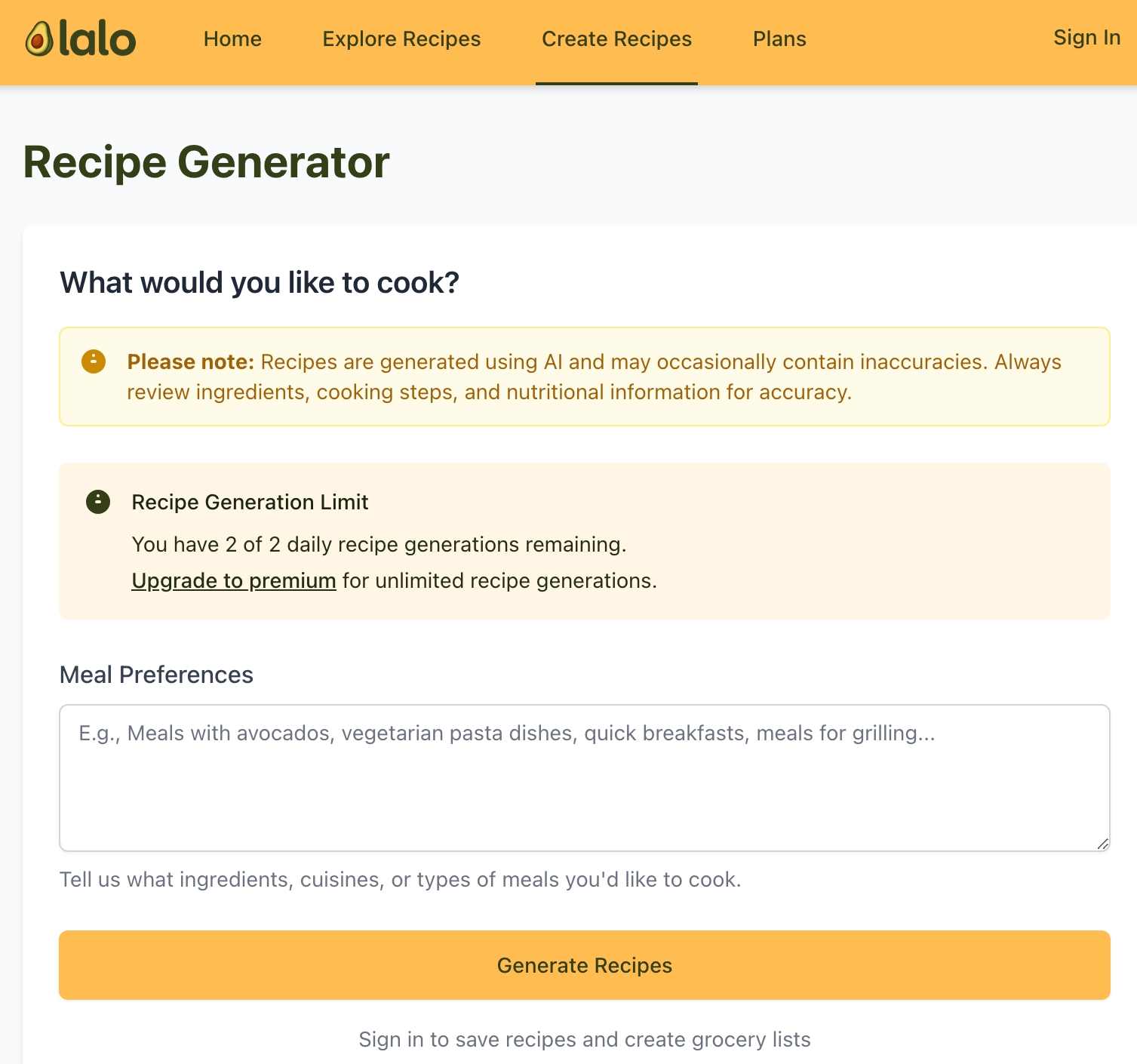

On the surface, it was a personalized meal-planning application powered by large language models. Users could generate meal plans, refine macros, swap ingredients conversationally, and automatically produce consolidated grocery lists.

Underneath, it became a crash course in applied AI systems design.

From Curiosity to Code

The initial goal was simple: generate structured meal plans using large language models.

GitHub Copilot became a collaborator. I leaned heavily into AI-assisted development — letting the model generate scaffolding, refine unit tests, and accelerate iteration. I learned quickly where it excelled and where it hallucinated. Clean abstractions required human intent. Copilot accelerated typing; it did not replace design thinking.

I integrated AWS Lambda for compute, API Gateway for routing, DynamoDB for state persistence, and S3 for asset delivery. GitHub Actions handled deployments and environment separation. Unit tests were not optional — they were essential guardrails in a probabilistic system.

It started as prompt engineering.

It quickly became orchestration.

Large language models are stateless. Users are not. The moment users began iterating — “swap chicken for salmon,” “reduce carbs,” “exclude dairy,” “aggregate groceries across sessions” — the problem space shifted. Generating text was trivial. Maintaining coherence across refinements was not.

Session memory had to be deliberate. Structured outputs had to be enforced. Grocery lists required deterministic post-processing to avoid duplication drift. Persist too much context and costs balloon. Persist too little and the experience degrades.
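To make "deterministic post-processing" concrete, here is a minimal sketch of validating model-produced grocery items and merging duplicates in plain code rather than asking the model to do it. It is an illustration under assumptions, not the code Lalo Meals shipped: the item shape (`name`, `quantity`, `unit`), the `UNIT_TO_BASE` table, and the function names are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical unit table: each unit maps to (base unit, multiplier).
UNIT_TO_BASE = {
    "g": ("g", 1.0),
    "kg": ("g", 1000.0),
    "ml": ("ml", 1.0),
    "l": ("ml", 1000.0),
    "each": ("each", 1.0),
}


@dataclass(frozen=True)
class GroceryItem:
    name: str
    quantity: float
    unit: str


def parse_item(raw: dict) -> GroceryItem:
    """Validate one model-produced item; raise ValueError if it is malformed."""
    try:
        name = str(raw["name"]).strip().lower()
        quantity = float(raw["quantity"])
        unit = str(raw["unit"]).strip().lower()
    except (KeyError, TypeError, ValueError) as exc:
        raise ValueError(f"malformed grocery item: {raw!r}") from exc
    if not name or quantity <= 0 or unit not in UNIT_TO_BASE:
        raise ValueError(f"invalid grocery item: {raw!r}")
    return GroceryItem(name, quantity, unit)


def consolidate(raw_items: list[dict]) -> list[GroceryItem]:
    """Merge duplicates by (normalized name, base unit), summing quantities."""
    totals: dict[tuple[str, str], float] = defaultdict(float)
    for raw in raw_items:
        item = parse_item(raw)
        base_unit, factor = UNIT_TO_BASE[item.unit]
        totals[(item.name, base_unit)] += item.quantity * factor
    # Sorting makes the output order stable across runs and sessions.
    return [GroceryItem(name, qty, unit) for (name, unit), qty in sorted(totals.items())]
```

Because the merge runs in ordinary code, the same inputs always produce the same list, regardless of how the model phrased or ordered its items. Anything that fails validation is rejected loudly instead of drifting into the list.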

The model was a component.

Orchestration was the system.

Apple's Ecosystem: A Different Layer of Complexity

The web application evolved into a Progressive Web App first. That part was manageable.

Deploying to Apple's ecosystem was not.

Apple's developer environment introduces a different category of constraints — provisioning profiles, signing certificates, review cycles, and opaque rejections. AI-assisted code generation helped, but it did not eliminate friction. Eventually, I hired a developer with deeper Apple experience to push the iOS build across the finish line.

That was another lesson: AI accelerates capability, but specialized environments still demand specialized expertise.

The application ultimately shipped as a fully functional iOS app on the App Store.

Embedding AI Within the App

The next phase was deeper integration.

I enabled an AI chat interface within the app. Conversations could be summarized. History could be preserved. Skills were introduced to retrieve recipes from a user's saved library. Outputs were structured and versioned. Token usage was monitored. Latency was tracked.
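One common way to preserve history without resending every turn is a rolling window: keep the most recent turns verbatim and fold older ones into a running summary. The sketch below shows that pattern under assumptions; `ConversationMemory`, its fields, and the `summarize` callable (which in practice would wrap a model call) are hypothetical names, not the app's actual design.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ConversationMemory:
    # Hypothetical callable: (previous summary, evicted turns) -> new summary.
    summarize: Callable[[str, list[str]], str]
    max_recent_turns: int = 6
    summary: str = ""
    recent: list[str] = field(default_factory=list)

    def add_turn(self, role: str, text: str) -> None:
        """Append a turn; fold the oldest turns into the summary on overflow."""
        self.recent.append(f"{role}: {text}")
        overflow = len(self.recent) - self.max_recent_turns
        if overflow > 0:
            evicted = self.recent[:overflow]
            self.recent = self.recent[overflow:]
            self.summary = self.summarize(self.summary, evicted)

    def build_context(self) -> str:
        """Return the text to send with the next request."""
        parts = []
        if self.summary:
            parts.append(f"Summary of earlier conversation: {self.summary}")
        parts.extend(self.recent)
        return "\n".join(parts)
```

The window size is the cost lever: a larger `max_recent_turns` keeps more detail at a higher token cost, while a smaller one leans harder on the quality of the summary.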

This is where applied AI reveals its real complexity.

Generative models produce probabilistic outputs. Applications demand deterministic behavior. Bridging that gap requires constraints, observability, and post-processing layers that most theoretical discussions omit.
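A small example of such a constraint layer is a validate-and-retry loop: parse the model's reply as JSON, check it against the shape the application expects, and retry a bounded number of times before failing. This is a sketch under assumptions; `call_model`, `REQUIRED_KEYS`, and the meal-plan keys are hypothetical stand-ins for whatever client and schema a real system would use.

```python
import json
from typing import Callable

# Hypothetical schema: keys the application requires in every meal plan.
REQUIRED_KEYS = {"meals", "total_calories"}


def generate_validated(
    call_model: Callable[[str], str],
    prompt: str,
    max_attempts: int = 3,
) -> dict:
    """Call the model until its output parses and validates, or give up."""
    last_error: Exception | None = None
    for _ in range(max_attempts):
        raw = call_model(prompt)
        try:
            data = json.loads(raw)
            if not isinstance(data, dict):
                raise ValueError("expected a JSON object")
            missing = REQUIRED_KEYS - data.keys()
            if missing:
                raise ValueError(f"missing keys: {sorted(missing)}")
            return data
        except ValueError as exc:  # json.JSONDecodeError is a ValueError
            last_error = exc
    raise RuntimeError(f"no valid output after {max_attempts} attempts") from last_error
```

The attempt cap matters as much as the validation: every retry costs tokens and latency, so an unbounded loop trades one failure mode for another.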

Prompt structures required versioning as carefully as APIs.

Token consumption required cost governance.

Output drift required evaluation.

AI does not reduce the need for engineering discipline. It increases it.
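As one illustration of that discipline, prompts can be registered under an explicit name and version, the same way an API pins its contract, and token spend can be checked against a hard budget before each call. The sketch below is hypothetical: `PromptTemplate`, `PromptRegistry`, and `TokenBudget` are illustrative names, and token counts are assumed to come from the provider's usage reporting.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptTemplate:
    name: str
    version: str
    template: str

    def render(self, **values: str) -> str:
        return self.template.format(**values)


class PromptRegistry:
    """Stores prompts by (name, version); a published version is immutable."""

    def __init__(self) -> None:
        self._prompts: dict[tuple[str, str], PromptTemplate] = {}

    def register(self, prompt: PromptTemplate) -> None:
        key = (prompt.name, prompt.version)
        if key in self._prompts:
            raise ValueError(f"{prompt.name}@{prompt.version} is already registered")
        self._prompts[key] = prompt

    def get(self, name: str, version: str) -> PromptTemplate:
        return self._prompts[(name, version)]


class TokenBudget:
    """Tracks token spend against a fixed limit (for example per user per day)."""

    def __init__(self, limit: int) -> None:
        self.limit = limit
        self.used = 0

    @property
    def remaining(self) -> int:
        return self.limit - self.used

    def charge(self, tokens: int) -> None:
        """Record usage, refusing any charge that would exceed the limit."""
        if self.used + tokens > self.limit:
            raise RuntimeError("token budget exceeded")
        self.used += tokens
```

Refusing to overwrite a registered version means an output can always be traced back to the exact prompt text that produced it, which is what makes drift measurable in the first place.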

Distribution Does Not Follow Architecture

The system functioned.

Meal plans generated cleanly. Iterative modifications maintained context. Grocery lists were usable. The AI experience felt coherent.

I spent money on marketing — primarily Instagram, Facebook, and TikTok. I experimented with short-form content and paid placements. I tested messaging angles.

User growth did not materialize in any measurable way.

This was perhaps the most important lesson.

Architectural excellence does not eliminate go-to-market risk.

Technical capability does not create distribution. In enterprise environments, this manifests as adoption failure. In startups, it manifests as burn-rate pressure.

After several months without meaningful traction, I discontinued my first attempt at a robust, vibe-coded consumer AI app.

That decision was not failure. It was signal.

What Lalo Meals Actually Became

Lalo Meals was not just a consumer product experiment.

It became a contained proving ground for applied AI systems thinking:

- Generative workflows embedded in stateful architectures

- Deterministic guardrails around probabilistic outputs

- Cost-aware orchestration under real usage constraints

- Human-AI interaction boundaries

- Infrastructure minimalism under uncertainty

A consistent pattern emerged.

The durable advantage in AI systems is not the model.

Models will evolve. Providers will change. Costs will fluctuate.

The advantage resides in architecture that anticipates volatility.
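One way to anticipate that volatility in code is to keep application logic behind a provider-neutral interface, so that swapping or adding a model is a configuration change rather than a rewrite. The sketch below assumes a hypothetical `TextModel` interface with a single `complete` method and a `ModelGateway` that falls back through providers in order; the two stand-in classes exist only to make the example runnable and do not represent any real provider SDK.

```python
from typing import Protocol


class TextModel(Protocol):
    """Hypothetical provider-neutral interface the application codes against."""

    def complete(self, prompt: str) -> str: ...


class ModelGateway:
    """Tries each configured provider in order and returns the first success."""

    def __init__(self, providers: list[TextModel]) -> None:
        if not providers:
            raise ValueError("at least one provider is required")
        self._providers = providers

    def complete(self, prompt: str) -> str:
        errors: list[Exception] = []
        for provider in self._providers:
            try:
                return provider.complete(prompt)
            except Exception as exc:  # any provider failure triggers fallback
                errors.append(exc)
        raise RuntimeError(f"all {len(errors)} providers failed") from errors[-1]


# Stand-in providers for illustration only.
class FailingModel:
    def complete(self, prompt: str) -> str:
        raise TimeoutError("provider unavailable")


class EchoModel:
    def complete(self, prompt: str) -> str:
        return f"echo: {prompt}"
```

With this shape, `ModelGateway([FailingModel(), EchoModel()]).complete("hi")` falls through the first provider and answers from the second, and the calling code never learns which one responded.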

If Rebuilt Today

If I rebuilt Lalo Meals today, I would narrow the initial audience faster. I would embed monetization constraints earlier. I would prioritize behavioral loops over feature breadth.

Iteration velocity compounds more reliably than feature expansion.

These are not only product observations. They are architectural ones.

Applied AI systems fail less often because of prompt quality and more often because of distribution misalignment, unclear value density, or uncontrolled cost structures.

Why This Series

This is the first entry in a broader Applied AI Systems series.

The objective is not to discuss AI abstractly, but to explore how generative models integrate into real architectures under real constraints — cost, governance, deployment environments, and human behavior.

Future essays will examine:

- State management strategies in generative workflows

- Designing model-agnostic AI gateways

- Observability patterns for probabilistic systems

- Agent orchestration versus deterministic service boundaries

- Cost governance in large-scale LLM integrations

AI will continue evolving rapidly.

Systems thinking must evolve faster.

Lalo Meals was my first proving ground in that evolution.

It will not be the last.